[KL520] Converting tflite model to onnx

Hello Kneroners,

I'm trying to convert a customized tflite model (Mediapipe) and upload it to a KL520 dongle.

Here are the conversion methods I've tried, with no luck so far.

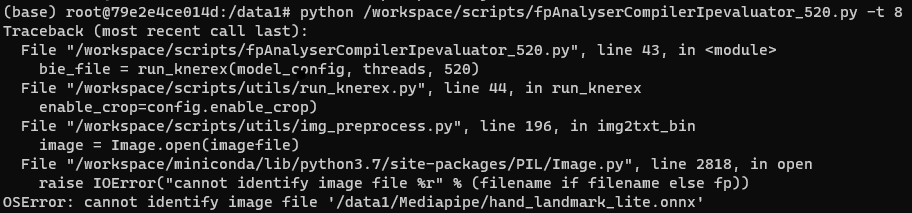

- First, I successfully converted tflite to onnx with tflite2onnx from GitHub (https://github.com/zhenhuaw-me/tflite2onnx), but got an OSError (cannot identify image file) while attempting to compile the onnx with fpAnalyserCompilerIpevaluator_520.py.

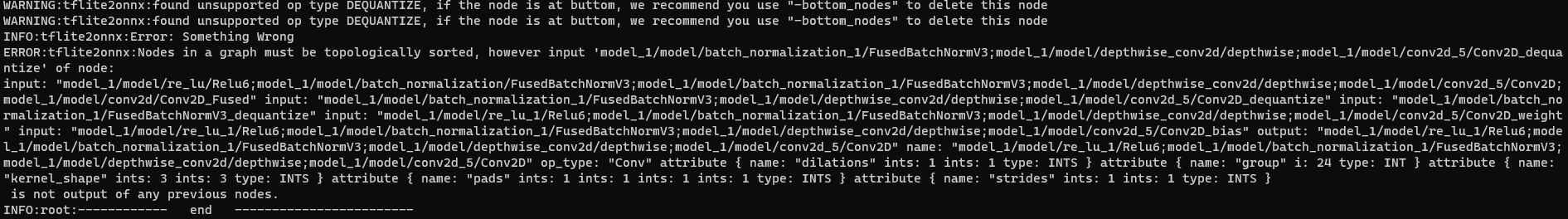

- Second, I tried to convert tflite to onnx with the tool in Kneron's docker (python /workspace/libs/ONNX_Convertor/tflite-onnx/onnx_tflite/tflite2onnx.py -tflite ./hand_landmark_full.tflite -save_path ./hand_landmark_full.onnx -release_mode True). It printed lots of WARNING messages and failed to create any file.

May I have your suggestions, or is there any document or discussion thread that I could refer to? Thank you.

BR, Wallace


Comments

Here's an update on the first conversion method.

I moved the image files to a separate folder and modified the "input_image_folder" parameter in "input_params.json" accordingly.

This seems to be a step forward, but another error is now reported:

"subprocess.CalledProcessError: Command '['/workspace/libs/fpAnalyser/updater/run_updater', '-i', '/workspace/.tmp/updater.json']' died with <Signals.SIGABRT: 6>. "

*The tflite and onnx files are uploaded for your reference.

Hello,

After repeatedly failing to convert tflite to onnx, I gave up and went back to try my luck with yolov5.

I tried to convert an optimized onnx to nef and got some error messages regarding length.

May I have your suggestions on this topic? Thanks.

@Wallace Tseng

Hi Wallace,

First, regarding the "cannot identify image file" error: your image folder may not be at the correct path, or input_params.json may not be filled in correctly.
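As a rough way to check the image folder before running the analyser, the sketch below lists files whose leading bytes do not match a few common image formats. It assumes the toolchain tries to open every file in the folder, which this thread does not confirm. The helper names `sniff_image` and `non_image_files` are hypothetical and not part of the Kneron toolchain.

```python
from pathlib import Path

# Leading bytes of a few common image formats (not an exhaustive list).
MAGIC = {
    b"\xff\xd8\xff": "jpeg",
    b"\x89PNG\r\n\x1a\n": "png",
    b"BM": "bmp",
}


def sniff_image(path):
    """Return a format name if the file starts like a known image, else None."""
    with open(path, "rb") as f:
        head = f.read(8)
    for magic, name in MAGIC.items():
        if head.startswith(magic):
            return name
    return None


def non_image_files(folder):
    """Return the sorted names of files in `folder` that do not look like images."""
    return sorted(
        p.name
        for p in Path(folder).iterdir()
        if p.is_file() and sniff_image(p) is None
    )
```

Calling `non_image_files` on the folder named in "input_image_folder" should return an empty list if only recognisable images are present. Anything it does list (model files, JSON, hidden files) is a candidate for the error.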

Second, regarding the tool in Kneron's docker

python /workspace/libs/ONNX_Convertor/tflite-onnx/onnx_tflite/tflite2onnx.py -tflite ./hand_landmark_full.tflite -save_path ./hand_landmark_full.onnx -release_mode True

which produced lots of warnings and failed to create any files: this problem has been reported to the relevant colleagues, and we recommend using tf2onnx (https://github.com/onnx/tensorflow-onnx) instead.

@Wallace Tseng

Hi Wallace,

You used this "hand_landmark_lite.onnx" model and ran Kneron's docker script

python /workspace/scripts/fpAnalyserCompilerIpevaluator_520.py -t 8

and got "died with <Signals.SIGABRT: 6>". Maybe you didn't optimize your hand_landmark_lite.onnx model. You need to run ONNX to ONNX (ONNX optimization) on your "hand_landmark_lite.onnx" model first (https://doc.kneron.com/docs/#toolchain/appendix/converters/, section 6, ONNX to ONNX (ONNX optimization)).

By the way, the KL520 does not support the Sigmoid operator, so you need to cut this operator out of the model and perform it on the host instead.
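If the Sigmoid is cut from the end of the model, the host has to apply it to the raw values returned by the dongle. A minimal sketch in plain Python is shown here. It assumes the raw outputs have already been converted to a list of floats; how they are read back from the device is not shown, and the function names are illustrative only.

```python
import math


def sigmoid(x):
    """Numerically stable logistic function for a single float."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, exp(x) cannot overflow, so use the equivalent form.
    z = math.exp(x)
    return z / (1.0 + z)


def apply_sigmoid(raw_outputs):
    """Apply the sigmoid that was removed from the model to each raw value."""
    return [sigmoid(v) for v in raw_outputs]
```

Splitting the computation by sign avoids an overflow in `math.exp` for large negative inputs, which can occur with unnormalised network outputs.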

@Wallace Tseng

Hi Wallace,

The KL520 does not support the Reshape and Mul operators, among others.

You can refer to the following page for the list of supported operators: https://doc.kneron.com/docs/#toolchain/appendix/operators/
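To see which of these operators a model actually contains before compiling, something like the sketch below may help. The set `REPORTED_UNSUPPORTED` only holds the operators named in this thread and is not a complete list, so the operator page above remains the reference. The function names are hypothetical, and only top-level graph nodes are inspected.

```python
from collections import Counter

# Operators reported in this thread as unsupported on KL520; NOT a complete list.
REPORTED_UNSUPPORTED = {"Sigmoid", "Reshape", "Mul"}


def flag_ops(op_types, unsupported=REPORTED_UNSUPPORTED):
    """Count how often each operator from `unsupported` appears in `op_types`."""
    counts = Counter(op_types)
    return {op: n for op, n in counts.items() if op in unsupported}


def op_types_in_model(path):
    """Return the op_type of every top-level node in an ONNX file."""
    import onnx  # imported lazily so flag_ops can be used without onnx installed

    model = onnx.load(path)
    return [node.op_type for node in model.graph.node]
```

For example, `flag_ops(op_types_in_model("hand_landmark_lite.onnx"))` would return a dictionary of the flagged operators and their counts.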

Hello Andy,

Thanks for your feedback.

I successfully converted the tflite file with "tf2onnx (https://github.com/onnx/tensorflow-onnx)" using opset v11 and had the output onnx file optimized. It seems I'm facing the same situation as in this thread (https://www.kneron.com/forum/discussion/comment/1037/#Comment_1037).

I also got a second onnx model, which causes the error "Invalid program input: The onnx should have one valid opset definition" while converting to a nef file.

Could you please share the method to remove the second onnx model? Much appreciated.
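For reference, a tf2onnx conversion of this kind can be sketched as below. The block only builds the command line, using the `--tflite`, `--output` and `--opset` options of `tf2onnx.convert`. The helper name `tf2onnx_command` and the file names are illustrative, and this is not necessarily the exact command used in this post.

```python
import sys


def tf2onnx_command(tflite_path, onnx_path, opset=11):
    """Build the argument list for converting a tflite file with tf2onnx."""
    return [
        sys.executable, "-m", "tf2onnx.convert",
        "--tflite", tflite_path,   # input TensorFlow Lite model
        "--output", onnx_path,     # where the ONNX model is written
        "--opset", str(opset),     # ONNX opset version to target
    ]
```

With tf2onnx installed, the list can be passed to `subprocess.run(cmd, check=True)` to perform the conversion.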

BR, Wallace

@Wallace Tseng

Hi Wallace,

The picture you provided already shows a way to delete the redundant opset definition.

You just need to add onnx.save(m, "input/your/path") to save the onnx. The code is as follows:

    import ktc
    import onnx
    m = onnx.load('aoi_opt.onnx')
    m.opset_import.pop()  # remove opset definition
    print(m.opset_import)
    onnx.save(m, "input/your/path")  # save your model

Or have I misunderstood your question?
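A slightly more defensive variant of the same idea is sketched below. It pops trailing opset entries only while more than one remains, so running it twice cannot strip the last definition. It assumes, as in the snippet above, that the first entry is the one to keep. The function names are hypothetical and the paths are placeholders.

```python
def trim_opsets(opset_import, keep=1):
    """Pop trailing entries until `keep` remain; return the removed entries."""
    removed = []
    while len(opset_import) > keep:
        removed.append(opset_import.pop())
    return removed


def save_with_single_opset(src_path, dst_path):
    """Load an ONNX file, drop extra opset definitions, and save the result."""
    import onnx  # imported lazily so trim_opsets can be tried on plain lists

    m = onnx.load(src_path)
    removed = trim_opsets(m.opset_import)
    print("removed opset entries:", removed)
    onnx.save(m, dst_path)
```

Printing the removed entries makes it easier to confirm that the dropped definition was the redundant one and not a domain some node still depends on.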